I am writing the data series on a daily basis

1 year we will have 365 data stories for reading ^^

Cosine !! What you are thinking about ?

Can you remember that you learnt on secondary school year 4 !?

So…. What is it !? Trigonometry !!

Sin(x), Cosine(x), Tan(x)

Is that’s right !?

On the day 4.

I am talking about Minkowski Distance

Have you remenbered Euclidean distance

and how to calculate a height of building

by using Trigonometry

Yes ! today I am talking about one of a measure function

called Cosine Similarity

If you can remember

This Euclidean distance and that Trigonometry

Let’s go to see how Cosine Similarity work !>>>

If not, Don’t worry about it

You can learn more on day 4

I provide on the link below

Link: https://bigdatarpg.com/2021/01/09/day-04-minkowski-distance/

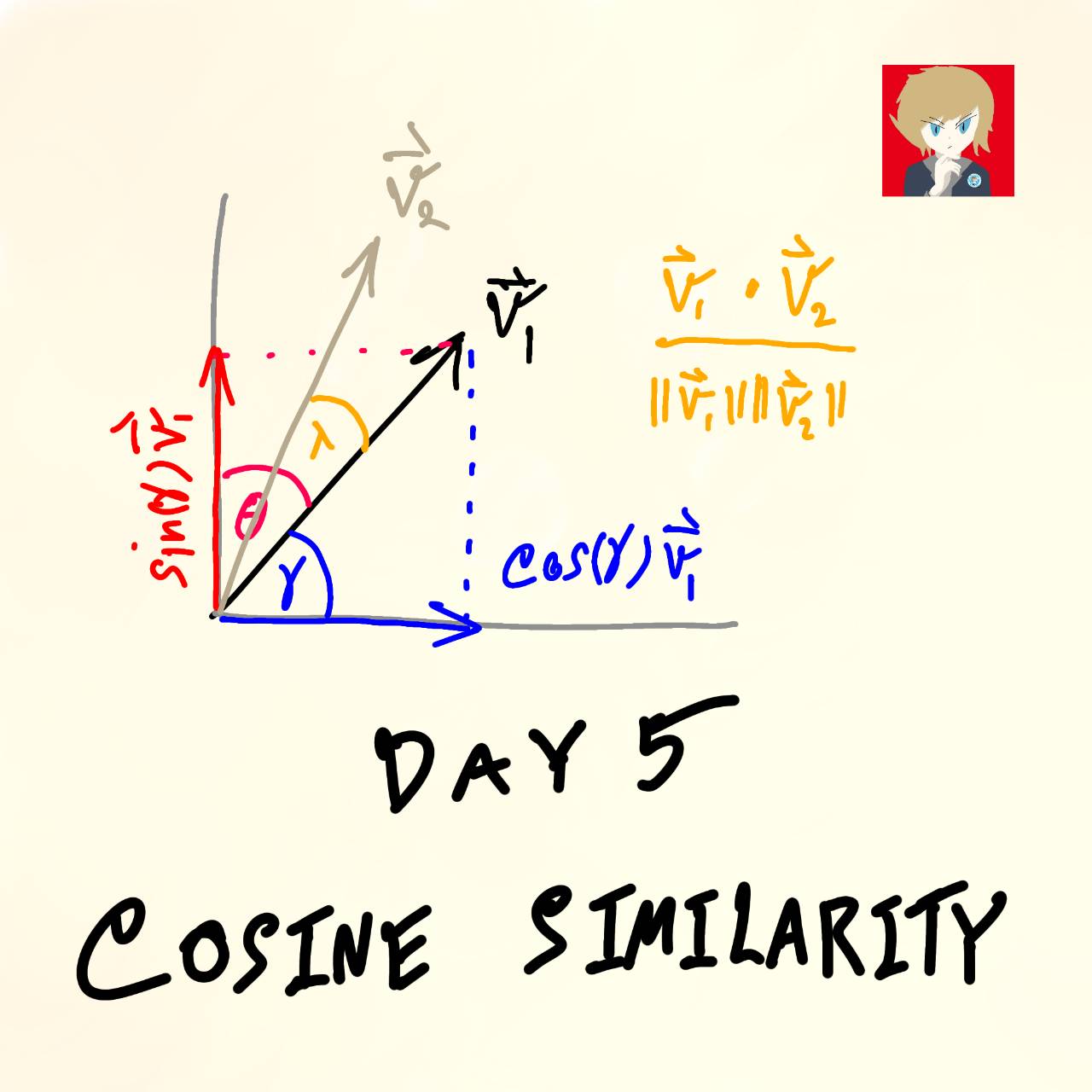

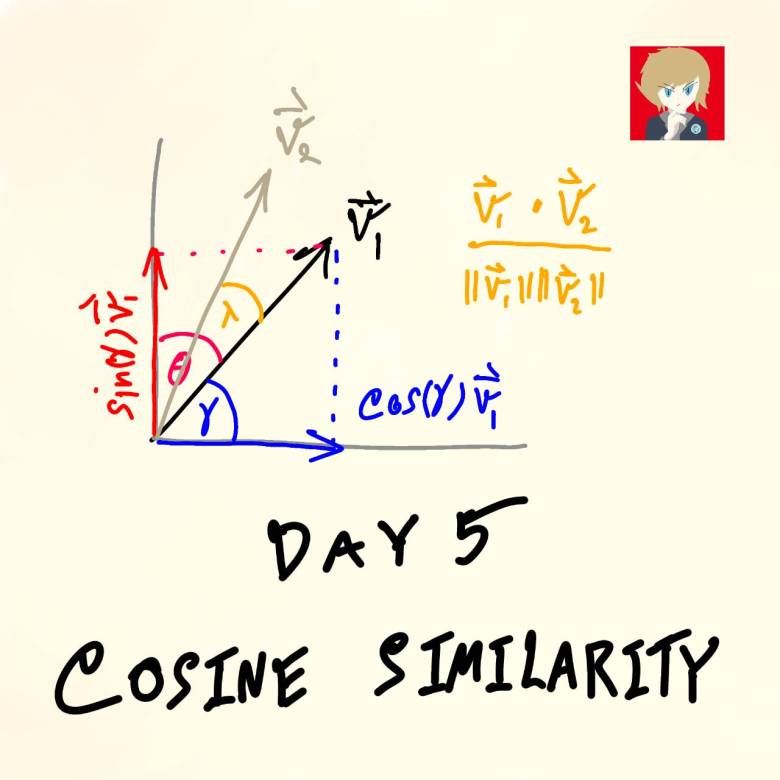

Cosine Similarity

Who used to use it before, Raise your hands up ^^ !!

The easy way to explain this system is

the measure that compare similarity of 2 vectors

by looking at angle between 2 vectors !

Ok That’s means this system require 2 vectors

Lets’ example

If there are 3 vectors

vector A, vector B, and vector C

(the result range of cosine similarity is between [0, 1]In some case has minus that’s means opppsite)

Check how similar

A and B = 0.5

A and C = 0.2

B and C = 0.7

Which pair is the most similar and

which one is the most not similar ?

OK Let’s see how to interprete !!

If result show that equal 1

that’s means 2 vectors are in the same line

they have 0 degree angle with together

If result show that equal 0

that’s means 2 vectors are not in the same line

they have 90 degree angle with together

If result show that equal -1

that’s means 2 vectors are in the same line

but they are 180 degree angle with together

one vector is the oppsite with another vector

Back to the result and question

Which pair is the most similar and

which one is the most not similar ?

So we get

B and C = 0.7 Very similar

A and B = 0.5 Similar but not much !

A and C = 0.2 That is not quite similarIs it easy Right !? ![]()

Calculate Cosine Similarity

Let’s see the example

If we have 3 customer

customer A, B, C

and each customer has 3 Features

Feature location

Feature education

Feature flag active customer

customer A = [Bangkok, Undergraduate, Y]

customer B = [Nonthaburi, Undergraduate, Y]

customer C = [Bangkok, Master, N]

We have to transform data from text to numerical

If we set Bangkok = 0, Nonthaburi = 1

Undergraduate = 0, Master = 1

N = 0, Y = 1

Now we have

customer A = [0, 0, 1]

customer B = [1, 0, 1]

customer C = [0, 1, 0]

Let’s see Features

It look like axis location as x-axis

education as y-axis

flag active customer as z-axis

combine into 1 vector of 1 customer

From Cosine Similarity

Sim(A,B) = Cos(degree) = (A dot B) / (||A|| * ||B||)

where

||A|| is Euclidean norm

||A|| = sqrt(x1**2 + x2**2 + … + xn**2)

Let’s calculate Euclidean distance

Euclidean distance(A, B) = sqrt(0**2 + 0**2 + 1**2) * sqrt(1**2 + 0**2 + 1**2)

Euclidean distance(A, C) = sqrt(0**2 + 0**2 + 1**2) * sqrt(0**2 + 1**2 + 0**2)

Euclidean distance(B, C) = sqrt(1**2 + 0**2 + 1**2) * sqrt(0**2 + 1**2 + 0**2)

Euclidean distance(A, B) = 1.4142

Euclidean distance(A, C) = 1.0000

Euclidean distance(B, C) = 1.4142

Let’s calculate dot product

What is dot product !???

Sum of product of each axis

So we have

A dot B = (0*1) + (0*0) + (1*1)

A dot C = (0*0) + (0*1) + (1*0)

B dot C = (0*0) + (0*1) + (1*0)

A dot B = 1

A dot C = 0

B dot C = 0

Combine them

Sim(A,B) = 1 / 1.4142

Sim(A,C) = 0 / 0.000

Sim(B,C) = 0 / 1.4142

Sim(A,B) = 0.707

Sim(A,C) = 0.000

Sim(B,C) = 0.000

As a result of Cosine Similarity

We found that

A and B = 0.707 Very similar

A and C = 0.000 It’s 90 deegree absolute different

B and C = 0.000 It’s 90 deegree absolute different

Um the result is quite not meaningful

Because we transform discrete data to numerical data

and we represent binary vector for customer

In this case

If our features has binary

Cosine Similarity can be rewrite to

A simple variation of cosine similarity

named Tanimoto distance

that is frequently used in information retrieval and biology taxonomy

For Tanimoto distance

instead of using Euclidean Norm

When we have binary vector

So we have

Sim(A,B) = (A dot B) / (A dot A) + (B dot B) – (A dot B)

Applications on Cosine Similarity

Example

– Clustering discrete data

– Check similarity of chemical molecule

– Clustering on continuous data

– Clustering customers

– Search documents by kewords

– Recommendation engine

– Check similarity of documents

– Search chatbot intent

– Check similarity of image

– Customer profiling

– Miscellaneous

Thank you my beloved fanpage

Please like share and comment

Made with Love ![]() by Boyd

by Boyd

This series is designed for everyone who are interested in dataor work in data field that are busy.Content may have swap between easy and hard.Combined with Coding, Math, Data, Business, and Misc- Do not hesitate to feedback me ![]()

– If some content wrong I have to say apologize in advance ![]()

– If you have experiences in this content, please kindly share to everyone ^^ ❤️

– Sorry for my poor grammar, I will practice more and more ![]()

– I am going to deliver more english content afterward ![]()

Follow me ![]()

Youtube: https://youtube.com/c/BigDataRPG

Fanpage: https://www.facebook.com/bigdatarpg/

Medium: https://www.medium.com/bigdataeng

Github: https://www.github.com/BigDataRPG

Kaggle: https://www.kaggle.com/boydbigdatarpg

Linkedin: https://www.linkedin.com/in/boyd-sorratat

Twitter: https://twitter.com/BoydSorratat

GoogleScholar: https://scholar.google.com/citations?user=9cIeYAgAAAAJ&hl=en